Navis

Guiding mobility through intelligent robotics

Supporting safer, more confident mobility for blind and visually impaired users in unfamiliar environments through real-time obstacle detection and assistive robotics.

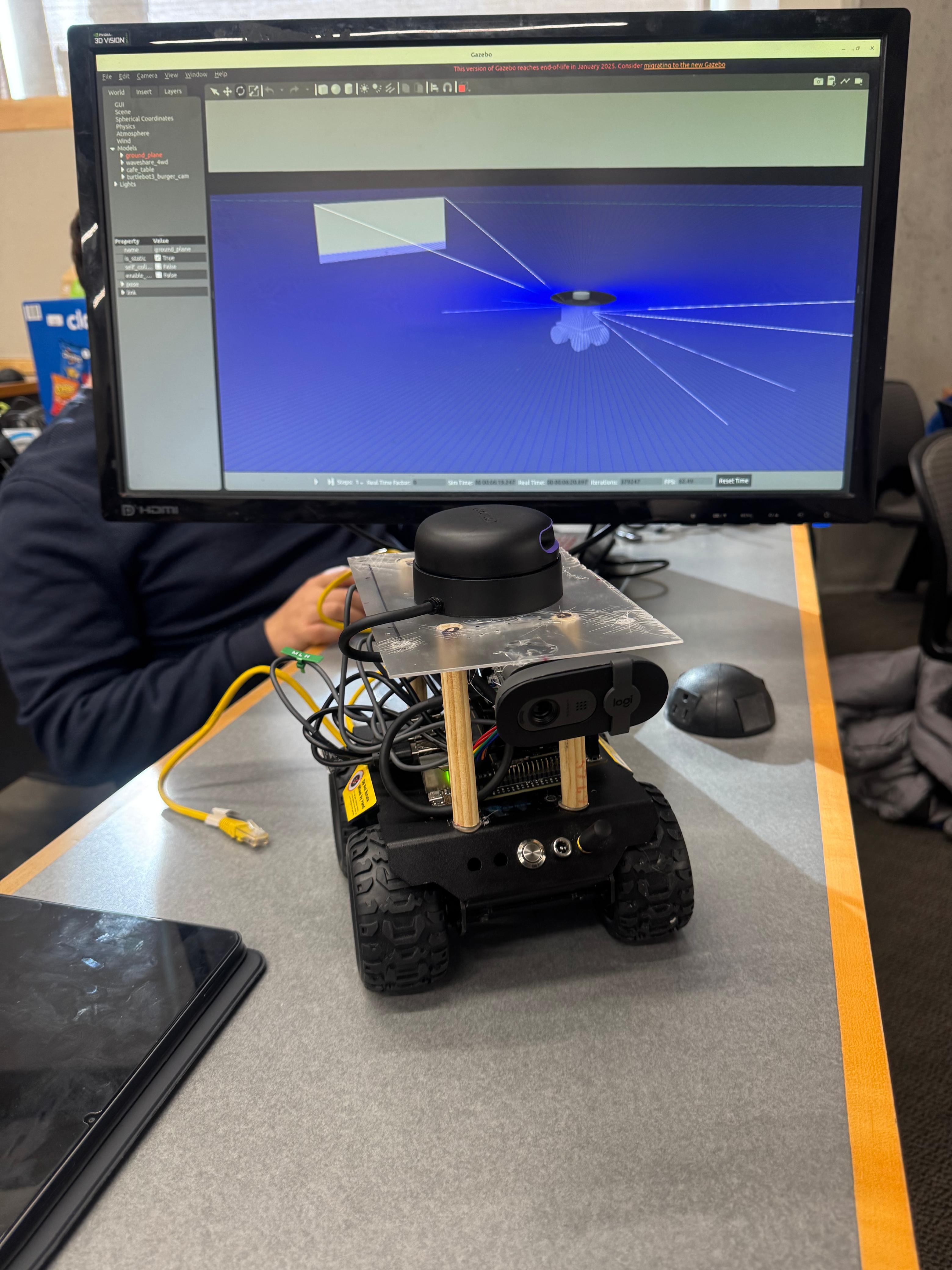

Prototype navigation setup

Front view of the robot with the simulator in the background.

Prototype demo

See it in action

Watch the prototype move and demonstrate an assistive navigation workflow in a real-world test scenario.

The problem

Mobility should not depend on uncertainty

Navigating unfamiliar environments can be stressful and unsafe for blind and visually impaired individuals. Traditional mobility aids remain essential, but they may leave gaps in obstacle awareness and short-range environmental feedback that affect confidence and independence.

How it works

Detect. Decide. Guide.

The robot detects nearby obstacles in real time, estimates safe navigation responses, and delivers accessible feedback designed to support screen-free guided mobility for blind and visually impaired users.
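As a rough illustration of the detect, decide, guide flow described above, here is a minimal Python sketch. It is not the Navis implementation: the `Reading` type, the three-direction distance layout, the `SAFE_DISTANCE_M` threshold, and the spoken cues are all hypothetical choices made only to show the shape of the loop.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    FORWARD = "forward"
    VEER_LEFT = "veer_left"
    VEER_RIGHT = "veer_right"
    STOP = "stop"


@dataclass(frozen=True)
class Reading:
    """Hypothetical distances to the nearest obstacle, in meters."""
    left: float
    center: float
    right: float


# Assumed clearance threshold for illustration; not a value from the project.
SAFE_DISTANCE_M = 1.0


def decide(reading: Reading, safe: float = SAFE_DISTANCE_M) -> Action:
    """Decide: pick a navigation response from one set of readings."""
    if reading.center >= safe:
        return Action.FORWARD
    # The path ahead is blocked. Stop if neither side has enough clearance.
    if max(reading.left, reading.right) < safe:
        return Action.STOP
    # Otherwise steer toward the more open side (ties go right).
    if reading.left > reading.right:
        return Action.VEER_LEFT
    return Action.VEER_RIGHT


def feedback(action: Action) -> str:
    """Guide: turn an action into a short, screen-free spoken cue."""
    cues = {
        Action.FORWARD: "Path clear. Continue forward.",
        Action.VEER_LEFT: "Obstacle ahead. Move left.",
        Action.VEER_RIGHT: "Obstacle ahead. Move right.",
        Action.STOP: "Stop. Obstacle ahead.",
    }
    return cues[action]


if __name__ == "__main__":
    # Detect: in a real system these values would come from sensors.
    sample = Reading(left=2.0, center=0.5, right=0.8)
    print(feedback(decide(sample)))
```

In a real prototype the decision step would likely need smoothing across several readings, and the cues could be delivered through audio or haptics rather than returned as text.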

Why it matters

Guide dogs cost over $20,000

A professionally trained guide dog can cost more than $20,000 USD, and availability is limited. This project explores an assistive robotics approach to providing scalable, affordable guidance support.

Impact

Built to support independence

Our goal is to make assistive mobility more intelligent, responsive, and accessible through robotics and AI that adapt to real-world conditions.

About us

Built at HackMerced by Team Navis

Navis was developed during HackMerced as a prototype focused on assistive mobility for blind and visually impaired users. Our team combined robotics, obstacle detection, and accessible design to explore a practical navigation support system within the time limits of a hackathon.

Project links

Interested in Navis?

Explore the project repository and see how Team Navis approached assistive robotics, prototyping, and accessibility at HackMerced.